Cross-cluster Data Migration Tool

The StarRocks Cross-cluster Data Migration Tool is provided by the StarRocks Community. You can use it to migrate data from a source cluster to a target cluster. The supported migration paths are as follows:

| Migration Path | Support information |

|---|---|

| From Shared-nothing to Shared-nothing | From v3.1.8 and v3.2.3 onwards |

| From Shared-nothing to Shared-data | From v3.1.8 and v3.2.3 onwards |

| From Shared-data to Shared-data | From v4.1 onwards |

| From Shared-data to Shared-nothing | Not supported |

Preparations

Migrate from Shared-nothing

On source cluster

You do not need to perform any preparations on the source cluster.

On target cluster

The following preparations must be performed on the target cluster for data migration.

Open ports

If you have enabled the firewall, you must open these ports:

| Component | Port | Default |

|---|---|---|

| FE | query_port | 9030 |

| FE | http_port | 8030 |

| FE | rpc_port | 9020 |

| BE/CN | be_http_port | 8040 |

| BE/CN | be_port | 9060 |

Migrate between Shared-data

On source cluster

During migration, the source cluster's Auto-Vacuum mechanism may delete historical data versions that the target CNs still need to read. To prevent this situation, you must extend the Auto-Vacuum grace period by dynamically setting the FE configuration item lake_autovacuum_grace_period_minutes to a significantly large value:

ADMIN SET FRONTEND CONFIG("lake_autovacuum_grace_period_minutes"="10000000");

This setting prevents the source cluster from reclaiming stale object storage files during migration, so storage usage on the source cluster grows for as long as it is in effect (storage amplification). Keep the migration window as short as possible, and reset this item to its default value, 30, after migration:

ADMIN SET FRONTEND CONFIG("lake_autovacuum_grace_period_minutes"="30");

On target cluster

The following preparations must be performed on the target cluster for data migration.

Open ports

If you have enabled the firewall, you must open these ports:

| Component | Port | Default |

|---|---|---|

| FE | query_port | 9030 |

| FE | http_port | 8030 |

| FE | rpc_port | 9020 |

Disable Compaction

You must disable Compaction on the target cluster during migration to prevent conflicts with incoming replication data.

- Dynamically disable Compaction:

ADMIN SET FRONTEND CONFIG("lake_compaction_max_tasks"="0");

- To prevent Compaction from being re-enabled after a cluster restart, also add the following configuration to the FE configuration file fe.conf:

lake_compaction_max_tasks = 0

After migration is complete, re-enable Compaction by removing the above configuration from fe.conf, and enable Compaction dynamically by executing:

ADMIN SET FRONTEND CONFIG("lake_compaction_max_tasks"="-1");

Create source storage volumes on the target cluster

The Migration Tool identifies which storage volume each source table uses, and looks up a corresponding storage volume on the target cluster using the naming convention src_<source_volume_name>. You must pre-create these storage volumes before starting migration.

These storage volumes are used only during migration to give target CNs read access to the source cluster's object storage. After migration is complete, they are no longer needed and can be dropped.

- On the source cluster, list all storage volumes:

SHOW STORAGE VOLUMES;

- For each storage volume used by the tables you plan to migrate, describe it to get its configuration:

DESCRIBE STORAGE VOLUME <volume_name>;

Example output:

+---------------------+------+-----------+-------------------------------+-----------------------------+
| Name                | Type | IsDefault | Location                      | Params                      |
+---------------------+------+-----------+-------------------------------+-----------------------------+
| builtin_storage_vol | S3   | true      | s3://my-bucket                | {"aws.s3.region":"...",...} |
+---------------------+------+-----------+-------------------------------+-----------------------------+

- On the target cluster, create a mirrored storage volume using the same object storage credentials, but with the name prefixed by src_:

CREATE STORAGE VOLUME src_<source_volume_name>
TYPE = S3
LOCATIONS = ("<same_location_as_source>")
PROPERTIES
(
    "enabled" = "true",
    "aws.s3.region" = "<region>",
    "aws.s3.endpoint" = "<endpoint>",
    "aws.s3.use_aws_sdk_default_behavior" = "false",
    "aws.s3.use_instance_profile" = "false",
    "aws.s3.access_key" = "<access_key>",
    "aws.s3.secret_key" = "<secret_key>",
    "aws.s3.enable_partitioned_prefix" = "false"
);

NOTE

- Set aws.s3.enable_partitioned_prefix to false regardless of the source cluster's setting. The migration tool reads files using the source partition's full path directly, so partitioned prefix must not be applied to the mirrored volume.

- Repeat this step for each unique storage volume used by the tables to be migrated. For example, if the source uses builtin_storage_volume, create src_builtin_storage_volume on the target cluster.

- It is recommended to use temporary credentials (access key / secret key) for the source storage volume. These can be revoked after migration is complete.
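
The naming convention and the fixed properties described above can be sketched in Python. This is a minimal illustration, not part of the migration tool: the function names are hypothetical, the property values are placeholders, and values are interpolated as-is without any SQL escaping.

```python
def mirrored_volume_name(source_volume_name: str) -> str:
    """Name the migration tool looks up on the target: src_<source_volume_name>."""
    return f"src_{source_volume_name}"


def create_mirror_statement(source_volume_name: str, location: str,
                            region: str, endpoint: str,
                            access_key: str, secret_key: str) -> str:
    """Render a CREATE STORAGE VOLUME statement for the mirrored volume.

    Hypothetical helper: values are inserted verbatim (no escaping).
    """
    properties = {
        "enabled": "true",
        "aws.s3.region": region,
        "aws.s3.endpoint": endpoint,
        "aws.s3.use_aws_sdk_default_behavior": "false",
        "aws.s3.use_instance_profile": "false",
        "aws.s3.access_key": access_key,
        "aws.s3.secret_key": secret_key,
        # Always false on the mirrored volume, regardless of the source setting.
        "aws.s3.enable_partitioned_prefix": "false",
    }
    body = ",\n".join(f'    "{key}" = "{value}"' for key, value in properties.items())
    return (
        f"CREATE STORAGE VOLUME {mirrored_volume_name(source_volume_name)}\n"
        "TYPE = S3\n"
        f'LOCATIONS = ("{location}")\n'
        "PROPERTIES\n"
        f"(\n{body}\n);"
    )


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(create_mirror_statement("builtin_storage_volume", "s3://my-bucket",
                                  "<region>", "<endpoint>",
                                  "<access_key>", "<secret_key>"))
```

Running the helper once per source storage volume yields one statement per mirrored volume to execute on the target cluster.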

Enable Legacy Compatibility for Replication

StarRocks may behave differently between the old and new versions, causing problems during cross-cluster data migration. Therefore, you must enable Legacy Compatibility for the target cluster before data migration and disable it after data migration is completed.

- You can check whether Legacy Compatibility for Replication is enabled by using the following statement:

ADMIN SHOW FRONTEND CONFIG LIKE 'enable_legacy_compatibility_for_replication';

If true is returned, Legacy Compatibility for Replication is enabled.

- Dynamically enable Legacy Compatibility for Replication:

ADMIN SET FRONTEND CONFIG("enable_legacy_compatibility_for_replication"="true");

- To prevent Legacy Compatibility for Replication from being disabled automatically if the cluster restarts during data migration, also add the following configuration item to the FE configuration file fe.conf:

enable_legacy_compatibility_for_replication = true

After the data migration is completed, you need to remove the configuration enable_legacy_compatibility_for_replication = true from the configuration file, and dynamically disable Legacy Compatibility for Replication using the following statement:

ADMIN SET FRONTEND CONFIG("enable_legacy_compatibility_for_replication"="false");

Configure Data Migration (Optional)

You can configure data migration operations using the following FE and BE parameters. In most cases, the default configuration can meet your needs. If you wish to use the default configuration, you can skip this step.

Please note that increasing the values of the following configuration items can accelerate migration but will also increase the load pressure on the source cluster.

FE Parameters

The following FE parameters are dynamic configuration items. Refer to Configure FE Dynamic Parameters on how to modify them.

| Parameter | Default | Unit | Description |

|---|---|---|---|

| replication_max_parallel_table_count | 100 | - | The maximum number of concurrent data synchronization tasks allowed. StarRocks creates one synchronization task for each table. |

| replication_max_parallel_replica_count | 10240 | - | The maximum number of tablet replicas allowed for concurrent synchronization. |

| replication_max_parallel_data_size_mb | 1048576 | MB | The maximum size of data allowed for concurrent synchronization. |

| replication_transaction_timeout_sec | 86400 | Seconds | The timeout duration for synchronization tasks. |

BE Parameters

The following BE parameter is a dynamic configuration item. Refer to Configure BE Dynamic Parameters on how to modify it.

| Parameter | Default | Unit | Description |

|---|---|---|---|

| replication_threads | 0 | - | The number of threads for executing synchronization tasks. 0 indicates setting the number of threads to four times the number of CPU cores on the machine where the BE resides. |
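
As a worked illustration of the replication_threads default, the following Python sketch computes the effective thread count. The function name is hypothetical, and the behavior for negative values is not described here, so it is not modeled.

```python
def effective_replication_threads(replication_threads: int, cpu_cores: int) -> int:
    """Return the thread count implied by the replication_threads setting.

    0 means four times the number of CPU cores on the BE machine;
    any other value is taken as the configured thread count.
    """
    if replication_threads == 0:
        return 4 * cpu_cores
    return replication_threads


if __name__ == "__main__":
    # A 16-core BE with the default setting.
    print(effective_replication_threads(0, 16))
```

For example, a BE with 16 CPU cores and the default value 0 would use 64 threads under this rule.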

Step 1: Install the Tool

It is recommended to install the migration tool on the server where the target cluster resides.

- Launch a terminal, and download the binary package of the tool.

wget https://releases.starrocks.io/starrocks/starrocks-cluster-sync.tar.gz

- Decompress the package.

tar -xvzf starrocks-cluster-sync.tar.gz

Step 2: Configure the Tool

Migration-related configuration

Navigate to the extracted folder and modify the configuration file conf/sync.properties.

cd starrocks-cluster-sync

vi conf/sync.properties

The file content is as follows:

# If true, all tables will be synchronized only once, and the program will exit automatically after completion.

one_time_run_mode=false

source_fe_host=

source_fe_query_port=9030

source_cluster_user=root

source_cluster_password=

source_cluster_password_secret_key=

# You can leave this empty or omit it if you want to migrate data between shared-data source clusters.

source_cluster_token=

target_fe_host=

target_fe_query_port=9030

target_cluster_user=root

target_cluster_password=

target_cluster_password_secret_key=

jdbc_connect_timeout_ms=30000

jdbc_socket_timeout_ms=60000

# Comma-separated list of database names or table names like <db_name> or <db_name.table_name>

# example: db1,db2.tbl2,db3

# Effective order: 1. include 2. exclude

include_data_list=

exclude_data_list=

# If there are no special requirements, please maintain the default values for the following configurations.

target_cluster_storage_volume=

# This configuration item is for migration between shared-data clusters only.

target_cluster_use_builtin_storage_volume_only=false

target_cluster_replication_num=-1

target_cluster_max_disk_used_percent=80

# To maintain consistency with the source cluster, use null.

target_cluster_enable_persistent_index=

max_replication_data_size_per_job_in_gb=1024

meta_job_interval_seconds=180

meta_job_threads=4

ddl_job_interval_seconds=10

ddl_job_batch_size=10

# table config

ddl_job_allow_drop_target_only=false

ddl_job_allow_drop_schema_change_table=true

ddl_job_allow_drop_inconsistent_partition=true

ddl_job_allow_drop_inconsistent_time_partition = true

ddl_job_allow_drop_partition_target_only=true

# index config

enable_bitmap_index_sync=false

ddl_job_allow_drop_inconsistent_bitmap_index=true

ddl_job_allow_drop_bitmap_index_target_only=true

# MV config

enable_materialized_view_sync=false

ddl_job_allow_drop_inconsistent_materialized_view=true

ddl_job_allow_drop_materialized_view_target_only=false

# View config

enable_view_sync=false

ddl_job_allow_drop_inconsistent_view=true

ddl_job_allow_drop_view_target_only=false

replication_job_interval_seconds=10

replication_job_batch_size=10

report_interval_seconds=300

enable_table_property_sync=false

The description of the parameters is as follows:

| Parameter | Description |

|---|---|

| one_time_run_mode | Whether to enable one-time synchronization mode. When one-time synchronization mode is enabled, the migration tool only performs full synchronization instead of incremental synchronization. |

| source_fe_host | The IP address or FQDN (Fully Qualified Domain Name) of the source cluster's FE. |

| source_fe_query_port | The query port (query_port) of the source cluster's FE. |

| source_cluster_user | The username used to log in to the source cluster. This user must be granted the OPERATE privilege on the SYSTEM level. |

| source_cluster_password | The user password used to log in to the source cluster. |

| source_cluster_password_secret_key | The secret key used to encrypt the password of the login user for the source cluster. The default value is an empty string, which means that the login password is not encrypted. If you want to encrypt source_cluster_password, you can get the encrypted source_cluster_password string by using the SQL statement SELECT TO_BASE64(AES_ENCRYPT('<source_cluster_password>','<source_cluster_password_secret_key>')). |

| source_cluster_token | Token of the source cluster. For information on how to obtain the cluster token, refer to Obtain Cluster Token below. NOTE: The Cluster Token is not required for migration between shared-data clusters because files are read directly from object storage. For that migration path, you can leave this item empty or omit it. |

| target_fe_host | The IP address or FQDN (Fully Qualified Domain Name) of the target cluster's FE. |

| target_fe_query_port | The query port (query_port) of the target cluster's FE. |

| target_cluster_user | The username used to log in to the target cluster. This user must be granted the OPERATE privilege on the SYSTEM level. |

| target_cluster_password | The user password used to log in to the target cluster. |

| target_cluster_password_secret_key | The secret key used to encrypt the password of the login user for the target cluster. The default value is an empty string, which means that the login password is not encrypted. If you want to encrypt target_cluster_password, you can get the encrypted target_cluster_password string by using the SQL statement SELECT TO_BASE64(AES_ENCRYPT('<target_cluster_password>','<target_cluster_password_secret_key>')). |

| jdbc_connect_timeout_ms | JDBC connection timeout in milliseconds for FE queries. Default: 30000. |

| jdbc_socket_timeout_ms | JDBC socket timeout in milliseconds for FE queries. Default: 60000. |

| include_data_list | The databases and tables that need to be migrated, with multiple objects separated by commas (,). For example: db1, db2.tbl2, db3. This item takes effect prior to exclude_data_list. If you want to migrate all databases and tables in the cluster, you do not need to configure this item. |

| exclude_data_list | The databases and tables that do not need to be migrated, with multiple objects separated by commas (,). For example: db1, db2.tbl2, db3. include_data_list takes effect prior to this item. If you want to migrate all databases and tables in the cluster, you do not need to configure this item. |

| target_cluster_storage_volume | The storage volume used to store tables in the target cluster when the target cluster is a shared-data cluster. If you want to use the default storage volume, you do not need to specify this item. |

| target_cluster_use_builtin_storage_volume_only | Whether to use builtin_storage_volume for migration in the target cluster. It is required for migration between shared-data clusters only. When this item is set to true, tables created in the target cluster will use builtin_storage_volume uniformly, instead of using the source cluster's storage_volume configuration. This is useful when the source cluster has multiple custom storage volumes but you want to consolidate all tables under one storage volume in the target cluster. |

| target_cluster_replication_num | The number of replicas specified when creating tables in the target cluster. If you want to use the same replica number as the source cluster, you do not need to specify this item. |

| target_cluster_max_disk_used_percent | Disk usage percentage threshold for BE nodes of the target cluster when the target cluster is shared-nothing. Migration is terminated when the disk usage of any BE in the target cluster exceeds this threshold. The default value is 80, which means 80%. |

| meta_job_interval_seconds | The interval, in seconds, at which the migration tool retrieves metadata from the source and target clusters. You can use the default value for this item. |

| meta_job_threads | The number of threads used by the migration tool to obtain metadata from the source and target clusters. You can use the default value for this item. |

| ddl_job_interval_seconds | The interval, in seconds, at which the migration tool executes DDL statements on the target cluster. You can use the default value for this item. |

| ddl_job_batch_size | The batch size for executing DDL statements on the target cluster. You can use the default value for this item. |

| ddl_job_allow_drop_target_only | Whether to allow the migration tool to delete databases or tables that exist only in the target cluster but not in the source cluster. The default is false, which means they will not be deleted. You can use the default value for this item. |

| ddl_job_allow_drop_schema_change_table | Whether to allow the migration tool to delete tables with inconsistent schemas between the source and target clusters. The default is true, meaning they will be deleted. You can use the default value for this item. The migration tool will automatically synchronize the deleted tables during the migration. |

| ddl_job_allow_drop_inconsistent_partition | Whether to allow the migration tool to delete partitions with inconsistent data distribution between the source and target clusters. The default is true, meaning they will be deleted. You can use the default value for this item. The migration tool will automatically synchronize the deleted partitions during the migration. |

| ddl_job_allow_drop_partition_target_only | Whether to allow the migration tool to delete partitions that are deleted in the source cluster to keep the partitions consistent between the source and target clusters. The default is true, meaning they will be deleted. You can use the default value for this item. |

| replication_job_interval_seconds | The interval, in seconds, at which the migration tool triggers data synchronization tasks. You can use the default value for this item. |

| replication_job_batch_size | The batch size at which the migration tool triggers data synchronization tasks. You can use the default value for this item. |

| max_replication_data_size_per_job_in_gb | The data size threshold at which the migration tool triggers data synchronization tasks. Unit: GB. Multiple data synchronization tasks will be triggered if the size of the partition to be migrated exceeds this value. The default value is 1024. You can use the default value for this item. |

| report_interval_seconds | The time interval at which the migration tool prints the progress information. Unit: Seconds. Default value: 300. You can use the default value for this item. |

| target_cluster_enable_persistent_index | Whether to enable persistent index in the target cluster. If this item is not specified, the target cluster is consistent with the source cluster. NOTE: When migrating data between two shared-data clusters, the tool automatically converts persistent_index_type = LOCAL to CLOUD_NATIVE in the CREATE TABLE statement for Primary Key tables. No manual action is needed. |

| ddl_job_allow_drop_inconsistent_time_partition | Whether to allow the migration tool to delete partitions with inconsistent time between the source and target clusters. The default is true, meaning they will be deleted. You can use the default value for this item. The migration tool will automatically synchronize the deleted partitions during the migration. |

| enable_bitmap_index_sync | Whether to enable synchronization for Bitmap indexes. |

| ddl_job_allow_drop_inconsistent_bitmap_index | Whether to allow the migration tool to delete inconsistent Bitmap indexes between the source and target clusters. The default is true, meaning they will be deleted. You can use the default value for this item. The migration tool will automatically synchronize the deleted indexes during the migration. |

| ddl_job_allow_drop_bitmap_index_target_only | Whether to allow the migration tool to delete Bitmap indexes that are deleted in the source cluster to keep the indexes consistent between the source and target clusters. The default is true, meaning they will be deleted. You can use the default value for this item. |

| enable_materialized_view_sync | Whether to enable synchronization for materialized views. |

| ddl_job_allow_drop_inconsistent_materialized_view | Whether to allow the migration tool to delete inconsistent materialized views between the source and target clusters. The default is true, meaning they will be deleted. You can use the default value for this item. The migration tool will automatically synchronize the deleted materialized views during the migration. |

| ddl_job_allow_drop_materialized_view_target_only | Whether to allow the migration tool to delete materialized views that are deleted in the source cluster to keep the materialized views consistent between the source and target clusters. The default in the configuration file above is false, meaning they will not be deleted. You can use the default value for this item. |

| enable_view_sync | Whether to enable synchronization for views. |

| ddl_job_allow_drop_inconsistent_view | Whether to allow the migration tool to delete inconsistent views between the source and target clusters. The default is true, meaning they will be deleted. You can use the default value for this item. The migration tool will automatically synchronize the deleted views during the migration. |

| ddl_job_allow_drop_view_target_only | Whether to allow the migration tool to delete views that are deleted in the source cluster to keep the views consistent between the source and target clusters. The default in the configuration file above is false, meaning they will not be deleted. You can use the default value for this item. |

| enable_table_property_sync | Whether to enable synchronization for table properties. |
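
The interaction between include_data_list and exclude_data_list (include first, then exclude) can be sketched as follows. This is an illustrative model only, not the tool's implementation: the function names are hypothetical, and it assumes that whitespace around entries is ignored and that an empty include list selects everything.

```python
def parse_data_list(value: str) -> set:
    """Split a comma-separated list such as 'db1,db2.tbl2,db3' into entries."""
    return {item.strip() for item in value.split(",") if item.strip()}


def matches(entries: set, db: str, table: str) -> bool:
    """An entry matches either a whole database or one db.table pair."""
    return db in entries or f"{db}.{table}" in entries


def should_migrate(db: str, table: str,
                   include_data_list: str = "",
                   exclude_data_list: str = "") -> bool:
    """Apply the include list first, then the exclude list."""
    include = parse_data_list(include_data_list)
    exclude = parse_data_list(exclude_data_list)
    # Assumption: an empty include list means "all databases and tables".
    if include and not matches(include, db, table):
        return False
    return not matches(exclude, db, table)


if __name__ == "__main__":
    print(should_migrate("db2", "tbl2", "db1,db2.tbl2,db3"))
    print(should_migrate("db1", "t1", "db1", "db1.t1"))
```

Under this model, a table listed in exclude_data_list is skipped even when its database appears in include_data_list.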


Obtain Cluster Token

The Cluster Token is not required for migration between shared-data clusters, so you can skip this step for that migration path.

The Cluster Token is available in the FE metadata. Log in to the server where the FE node is located and run the following command:

cat fe/meta/image/VERSION | grep token

Output:

token=wwwwwwww-xxxx-yyyy-zzzz-uuuuuuuuuu
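
If you prefer to extract the token programmatically, the following Python sketch mirrors the grep command above. It assumes only that the VERSION file contains a line of the form token=<value>, as shown in the output; the function name and the extra line in the usage example are hypothetical.

```python
def parse_cluster_token(version_file_text: str) -> str:
    """Return the value of the token=<value> line in the VERSION file text."""
    for line in version_file_text.splitlines():
        key, sep, value = line.strip().partition("=")
        if sep and key == "token":
            return value
    raise ValueError("no token=<value> line found")


if __name__ == "__main__":
    # The first line is a made-up placeholder; only the token line matters.
    sample = "some_other_key=1\ntoken=wwwwwwww-xxxx-yyyy-zzzz-uuuuuuuuuu\n"
    print(parse_cluster_token(sample))
```

The returned string is the value to set for source_cluster_token in conf/sync.properties.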

Map storage volumes

When the migration tool creates a table on the target cluster, it determines the table's storage volume as follows (in order of precedence):

- If target_cluster_use_builtin_storage_volume_only is set to true, builtin_storage_volume is used for all tables.

- If target_cluster_storage_volume is set to a specific storage volume, the specified storage volume is used for all tables.

- Otherwise, by default, the source table's storage volume property is preserved. Tables from different source storage volumes are created under the corresponding storage volumes on the target cluster, provided those storage volumes exist on the target.

Therefore, if you want to keep the storage volume property for each table in the source cluster, you can pre-create the same storage volumes in the target cluster. After migration, each table in the target cluster will inherit the storage volume property it had in the source cluster.

Note that the src_-prefixed storage volumes serve a different purpose: they are used only during migration to give target CNs read access to the source cluster's object storage. After migration is complete, the src_<name> volumes are no longer needed and can be dropped.
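
The order of precedence for choosing a table's storage volume can be expressed as a short Python sketch. It is an illustration of the rules in this section rather than the tool's code: the function name is hypothetical, and the check that the chosen volume actually exists on the target cluster is not modeled.

```python
BUILTIN_STORAGE_VOLUME = "builtin_storage_volume"


def resolve_target_storage_volume(source_table_volume: str,
                                  use_builtin_storage_volume_only: bool = False,
                                  target_cluster_storage_volume: str = "") -> str:
    """Pick the storage volume for a table created on the target cluster."""
    # 1. target_cluster_use_builtin_storage_volume_only=true wins.
    if use_builtin_storage_volume_only:
        return BUILTIN_STORAGE_VOLUME
    # 2. Then an explicitly configured target_cluster_storage_volume.
    if target_cluster_storage_volume:
        return target_cluster_storage_volume
    # 3. Otherwise keep the source table's storage volume.
    return source_table_volume


if __name__ == "__main__":
    print(resolve_target_storage_volume("vol_a"))
    print(resolve_target_storage_volume("vol_a", target_cluster_storage_volume="vol_x"))
    print(resolve_target_storage_volume("vol_a", True, "vol_x"))
```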

Network-related configuration (Optional)

Migrate from Shared-nothing

During data migration, the migration tool needs to access all FE nodes of both the source and target clusters, and the target cluster needs to access all BE and CN nodes of the source cluster.

You can obtain the network addresses of these nodes by executing the following statements on the corresponding cluster:

-- Obtain the network addresses of FE nodes in a cluster.

SHOW FRONTENDS;

-- Obtain the network addresses of BE nodes in a cluster.

SHOW BACKENDS;

-- Obtain the network addresses of CN nodes in a cluster.

SHOW COMPUTE NODES;

If these nodes use private addresses that cannot be accessed outside the cluster, such as internal network addresses within a Kubernetes cluster, you need to map these private addresses to addresses that can be accessed from outside.

Navigate to the extracted folder of the tool and modify the configuration file conf/hosts.properties.

cd starrocks-cluster-sync

vi conf/hosts.properties

The default content of the file is as follows, describing how network address mapping is configured:

# <SOURCE/TARGET>_<host>=<mappedHost>[;<srcPort>:<dstPort>[,<srcPort>:<dstPort>...]]

The <host> must match the address shown in the IP column returned by SHOW FRONTENDS, SHOW BACKENDS, or SHOW COMPUTE NODES.

The following example performs these operations:

- Map the source cluster's private network addresses 192.1.1.1 and 192.1.1.2 to 10.1.1.1 and 10.1.1.2.

- Map the source cluster's FE ports 8030 and 9030 to 38030 and 39030 on 10.1.1.1.

- Map the target cluster's private network address fe-0.starrocks.svc.cluster.local to 10.1.2.1 and remap port 9030 to 19030.

# <SOURCE/TARGET>_<host>=<mappedHost>[;<srcPort>:<dstPort>[,<srcPort>:<dstPort>...]]

SOURCE_192.1.1.1=10.1.1.1;8030:38030,9030:39030

SOURCE_192.1.1.2=10.1.1.2

TARGET_fe-0.starrocks.svc.cluster.local=10.1.2.1;9030:19030
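
To make the mapping format concrete, here is a small Python sketch that parses lines in this format and resolves an address. It is not the tool's parser: the function names are hypothetical, and it assumes that ports without an explicit mapping keep their original number and that hosts without an entry are used unchanged.

```python
EXAMPLE = """\
# <SOURCE/TARGET>_<host>=<mappedHost>[;<srcPort>:<dstPort>[,<srcPort>:<dstPort>...]]
SOURCE_192.1.1.1=10.1.1.1;8030:38030,9030:39030
SOURCE_192.1.1.2=10.1.1.2
TARGET_fe-0.starrocks.svc.cluster.local=10.1.2.1;9030:19030
"""


def parse_hosts_properties(text: str) -> dict:
    """Return {(side, host): (mapped_host, {src_port: dst_port})}."""
    mappings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        key, _, value = line.partition("=")
        side, _, host = key.partition("_")  # SOURCE or TARGET, then the host
        mapped_host, _, port_spec = value.partition(";")
        ports = {}
        if port_spec:
            for pair in port_spec.split(","):
                src, _, dst = pair.partition(":")
                ports[int(src)] = int(dst)
        mappings[(side, host)] = (mapped_host, ports)
    return mappings


def resolve(mappings: dict, side: str, host: str, port: int) -> tuple:
    """Translate (host, port) using the parsed mappings."""
    if (side, host) not in mappings:
        return host, port  # assumption: unmapped hosts are used as-is
    mapped_host, ports = mappings[(side, host)]
    return mapped_host, ports.get(port, port)  # assumption: unmapped ports unchanged


if __name__ == "__main__":
    table = parse_hosts_properties(EXAMPLE)
    print(resolve(table, "SOURCE", "192.1.1.1", 9030))
    print(resolve(table, "TARGET", "fe-0.starrocks.svc.cluster.local", 9030))
```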

Migrate between Shared-data

During data migration, the migration tool needs to access all FE nodes of both the source and target clusters.

Unlike migration from shared-nothing clusters, you do not need to configure network access from the target cluster to the source cluster's CN nodes, because data is transferred directly between object storage systems.

You can obtain the FE network addresses by executing the following statement on the corresponding cluster:

-- FE nodes

SHOW FRONTENDS;

If FE nodes use private addresses that cannot be accessed outside the cluster, such as internal network addresses within a Kubernetes cluster, you need to map these private addresses to addresses that can be accessed from outside.

Navigate to the extracted folder of the tool and modify the configuration file conf/hosts.properties.

cd starrocks-cluster-sync

vi conf/hosts.properties

The default content of the file is as follows, describing how network address mapping is configured:

# <SOURCE/TARGET>_<host>=<mappedHost>[;<srcPort>:<dstPort>[,<srcPort>:<dstPort>...]]

The <host> must match the address shown in the IP column returned by SHOW FRONTENDS.

The following example maps the target cluster's internal Kubernetes FQDN to a reachable IP:

TARGET_frontend-0.frontend.mynamespace.svc.cluster.local=10.1.2.1;9030:19030

Step 3: Start the Migration Tool

After configuring the tool, start the migration tool to initiate the data migration process.

./bin/start.sh

- If you are migrating data from a shared-nothing cluster, make sure that the BE nodes of the source and target clusters can properly communicate via the network.

- During runtime, the migration tool regularly checks whether the data in the target cluster is lagging behind the source cluster. If there is a lag, it initiates data migration tasks.

- If new data is constantly loaded into the source cluster, data synchronization will continue until the data in the target cluster is consistent with that in the source cluster.

- You can query tables in the target cluster during migration, but do not load new data into the tables, as it may result in inconsistencies between the data in the target cluster and the source cluster. Currently, the migration tool does not forbid data loading into the target cluster during migration.

- Note that data migration does not automatically terminate. You need to manually check and confirm the completion of migration and then stop the migration tool.

View Migration Progress

View Migration Tool logs

You can check the migration progress through the migration tool log log/sync.INFO.log.

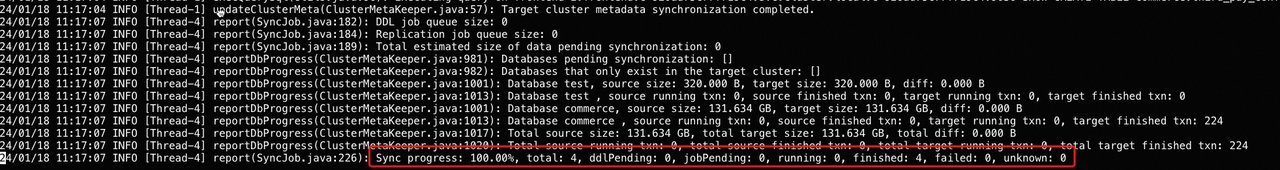

Example 1: View task progress.

The important metrics are as follows:

- Sync job progress: The progress of data migration. The migration tool regularly checks whether the data in the target cluster is lagging behind the source cluster. Therefore, a progress of 100% only means that the data synchronization is completed within the current check interval. If new data continues to be loaded into the source cluster, the progress may decrease in the next check interval.

- total: The total number of all types of jobs in this migration operation.

- ddlPending: The number of DDL jobs pending to be executed.

- jobPending: The number of pending data synchronization jobs to be executed.

- sent: The number of data synchronization jobs sent from the source cluster but not yet started. Theoretically, this value should not be too large. If the value keeps increasing, please contact our engineers.

- running: The number of data synchronization jobs that are currently running.

- finished: The number of data synchronization jobs that are finished.

- failed: The number of failed data synchronization jobs. Failed data synchronization jobs will be resent. Therefore, in most cases, you can ignore this metric. If this value is significantly large, please contact our engineers.

- unknown: The number of jobs with an unknown status. Theoretically, this value should always be 0. If this value is not 0, please contact our engineers.

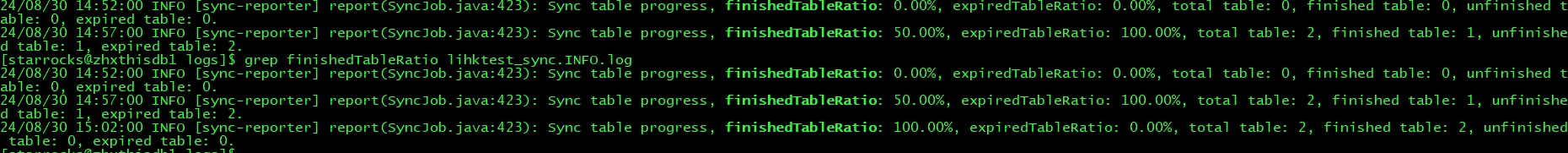

Example 2: View the table migration progress.

- Sync table progress: Table migration progress, that is, the ratio of tables that have been migrated in this migration task to all the tables that need to be migrated.

- finishedTableRatio: Ratio of tables with at least one successful synchronization task execution.

- expiredTableRatio: Ratio of tables with expired data.

- total table: Total number of tables involved in this data migration process.

- finished table: Number of tables with at least one successful synchronization task execution.

- unfinished table: Number of tables with no synchronization task execution.

- expired table: Number of tables with expired data.

View Migration Transaction Status

The migration tool opens a transaction for each table. You can view the status of the migration for a table by checking the status of its corresponding transaction.

SHOW PROC "/transactions/<db_name>/running";

<db_name> is the name of the database where the table is located.

View Partition Data Versions

You can compare the data versions of the corresponding partitions in the source and target clusters to view the migration status of that partition.

SHOW PARTITIONS FROM <table_name>;

<table_name> is the name of the table to which the partition belongs.

View Data Volume

You can compare the data volumes in the source and target clusters to view the migration status.

SHOW DATA;

View Table Row Count

You can compare the row counts of tables in the source and target clusters to view the migration status of each table.

SELECT

TABLE_NAME,

TABLE_ROWS

FROM INFORMATION_SCHEMA.TABLES

WHERE TABLE_TYPE = 'BASE TABLE'

ORDER BY TABLE_NAME;
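
Once you have the result of this query from both clusters, the comparison itself can be automated. The Python sketch below assumes you have already loaded each result into a dictionary of table name to row count (how you fetch the rows is up to you); the function name is hypothetical.

```python
def diff_row_counts(source_rows: dict, target_rows: dict) -> dict:
    """Return {table: (source_count, target_count)} for tables that differ.

    A table that is missing on one side is reported with None for that side.
    """
    diffs = {}
    for table in sorted(set(source_rows) | set(target_rows)):
        source_count = source_rows.get(table)
        target_count = target_rows.get(table)
        if source_count != target_count:
            diffs[table] = (source_count, target_count)
    return diffs


if __name__ == "__main__":
    source = {"orders": 1000, "users": 250}
    target = {"orders": 1000, "users": 240}
    for table, (src, dst) in diff_row_counts(source, target).items():
        print(f"{table}: source={src} target={dst}")
```

An empty result means the row counts reported for every table match on both clusters. If you need exact counts for a specific table, running SELECT COUNT(*) on both clusters is another option.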

After Migration

When Sync job progress has been stable at 100% and your business is ready to switch, complete the cutover as follows:

- Stop writes to the source cluster.

- Verify that Sync job progress reaches and remains at 100% after writes stop.

- Stop the migration tool.

- Point your applications to the target cluster address.

- If you migrated data between shared-data clusters, restore the Auto-Vacuum setting on the source cluster:

ADMIN SET FRONTEND CONFIG("lake_autovacuum_grace_period_minutes"="30");

- If you migrated data between shared-data clusters, re-enable Compaction on the target cluster. Remove lake_compaction_max_tasks = 0 from fe.conf and execute:

ADMIN SET FRONTEND CONFIG("lake_compaction_max_tasks"="-1");

- Disable Legacy Compatibility for Replication on the target cluster. Remove enable_legacy_compatibility_for_replication = true from fe.conf and execute:

ADMIN SET FRONTEND CONFIG("enable_legacy_compatibility_for_replication"="false");
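
As a checklist aid, the following Python sketch collects the cleanup statements from the steps above by migration path. The function name is hypothetical, and the sketch only builds the statement list; it does not connect to any cluster or edit fe.conf.

```python
def cleanup_statements(between_shared_data: bool) -> dict:
    """Return the SQL statements to run on each cluster after cutover."""
    source, target = [], []
    if between_shared_data:
        # Restore Auto-Vacuum on the source and Compaction on the target.
        source.append('ADMIN SET FRONTEND CONFIG("lake_autovacuum_grace_period_minutes"="30");')
        target.append('ADMIN SET FRONTEND CONFIG("lake_compaction_max_tasks"="-1");')
    # Applies to every migration path.
    target.append('ADMIN SET FRONTEND CONFIG("enable_legacy_compatibility_for_replication"="false");')
    return {"source": source, "target": target}


if __name__ == "__main__":
    for cluster, statements in cleanup_statements(between_shared_data=True).items():
        for statement in statements:
            print(f"[{cluster}] {statement}")
```

Remember that the matching entries in fe.conf still have to be removed by hand, as described in the steps above.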

Limits

The migration tool currently supports synchronizing the following objects. Objects not listed here are not supported.

- Databases

- Internal tables and their data

- Materialized view schemas and their building statements (The data in the materialized view will not be synchronized. If the base tables of the materialized view are not synchronized to the target cluster, the background refresh task of the materialized view reports an error.)

- Logical views

For migration between shared-data clusters:

- The target cluster must be running on v4.1 or later.

- Migration from a shared-data cluster to a shared-nothing target is not supported.

- Each storage volume used by the source cluster's tables must have a corresponding src_<volume_name> storage volume pre-created on the target cluster.